In Part 1 of this series, we talked about upcoming feature detection algorithms in the ArrayFire library. In this post we showcase some preliminary results of feature description and matching, which are under development in ArrayFire. Feature description is done using the ORB feature descriptor [2]. The descriptors are matched against a database of features using Hamming distance as the metric.
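
Brute-force Hamming matching compares every query descriptor against every database descriptor and keeps the one with the fewest differing bits. The sketch below is a minimal pure-Python illustration, not ArrayFire's implementation. It assumes binary descriptors are packed into Python integers (ORB descriptors are 256-bit strings), and the function names `hamming` and `match` are hypothetical.

```python
from typing import List, Tuple


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two binary descriptors packed as ints."""
    return bin(a ^ b).count("1")


def match(queries: List[int], database: List[int]) -> List[Tuple[int, int]]:
    """Brute-force matcher (hypothetical helper, not ArrayFire's API).

    For each query descriptor, return (index of the nearest database
    descriptor, its Hamming distance). Ties go to the lowest index.
    """
    results = []
    for q in queries:
        best_idx = min(range(len(database)), key=lambda i: hamming(q, database[i]))
        results.append((best_idx, hamming(q, database[best_idx])))
    return results


if __name__ == "__main__":
    db = [0b0000, 0b1011, 0b1111]
    print(match([0b1010, 0b1111], db))
```

Every query is independent of every other, and every distance within a query is independent too, which is why this step parallelizes naturally on a GPU.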

The results shown in this post use the same hardware and software as the previous post:

- Intel Sandy Bridge Xeon processor with 32 cores (for baseline OpenCV CPU implementation)

- NVIDIA Tesla K20C (for OpenCV and ArrayFire CUDA implementations)

- ArrayFire development version

- OpenCV version 2.4.9

Feature Description and Matching Benchmarks

In Part 1 we showed that feature detection with FAST [1] scales with the number of features detected. While ORB uses FAST for the feature detection step, it performs additional computations to calculate Harris corner scores as well as the orientation of each feature [3]. As a result, ORB scales with the number of pixels (meaning it is not bottlenecked by FAST).
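
ORB assigns each keypoint an orientation using the intensity centroid of a circular patch around it: with moments $m_{10} = \sum x\,I(x,y)$ and $m_{01} = \sum y\,I(x,y)$ taken about the patch centre, the angle is $\theta = \operatorname{atan2}(m_{01}, m_{10})$. The sketch below is a minimal pure-Python illustration, assuming a small square grayscale patch given as a list of rows (y pointing down, as in image coordinates). It is not ArrayFire's or OpenCV's code, and it omits the Harris scoring step.

```python
import math
from typing import List


def orientation(patch: List[List[float]]) -> float:
    """Intensity-centroid orientation (radians) of a square patch.

    Moments are computed with the patch centre as origin, keeping only
    pixels inside the inscribed circle, as ORB does with its circular patch.
    """
    n = len(patch)
    c = (n - 1) / 2.0          # centre coordinate
    r2 = c * c                 # squared radius of the inscribed circle
    m10 = m01 = 0.0
    for row in range(n):
        for col in range(n):
            x, y = col - c, row - c
            if x * x + y * y <= r2:
                m10 += x * patch[row][col]
                m01 += y * patch[row][col]
    return math.atan2(m01, m10)


if __name__ == "__main__":
    # Bright right half: the centroid lies along +x, so the angle is 0.
    right = [[1.0 if col > 2 else 0.0 for col in range(5)] for _ in range(5)]
    print(orientation(right))
```

Rotating the sampling pattern by this angle is what makes the ORB descriptor robust to in-plane rotation, which matters for the rotated-image matching shown later in this post.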

The Hamming matcher benchmarks are shown below. For benchmarking purposes, we used a set of 500 test descriptors (roughly the number of descriptors extracted from a single image), matched against databases containing 500 to 5 million descriptors, with each step increasing the database size by an order of magnitude (500, 5,000, 50,000, 500,000 and 5,000,000).
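
A brute-force matcher computes one Hamming distance per (query, database) pair, so the workload grows linearly with the database size. The sketch below enumerates the benchmark sizes and the resulting work, assuming 32-byte (256-bit) ORB descriptors. The helper `benchmark_sizes` and the constants are hypothetical, used only for this back-of-the-envelope calculation.

```python
QUERY_COUNT = 500          # test descriptors, as in the benchmark
DESCRIPTOR_BYTES = 32      # assumption: 256-bit ORB descriptors


def benchmark_sizes(start: int = 500, stop: int = 5_000_000) -> list:
    """Database sizes from start to stop, growing by a factor of 10 each step."""
    sizes = []
    n = start
    while n <= stop:
        sizes.append(n)
        n *= 10
    return sizes


def report() -> None:
    for n in benchmark_sizes():
        comparisons = QUERY_COUNT * n          # one Hamming distance per pair
        db_mb = n * DESCRIPTOR_BYTES / 1e6     # database size in megabytes
        print(f"{n:>9,} descriptors: {comparisons:>13,} distances, {db_mb:8.3f} MB")


if __name__ == "__main__":
    report()
```

At the largest size this is 500 × 5,000,000 = 2.5 billion distance computations over a 160 MB database (under the 32-byte assumption).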

Descriptor Matching Samples

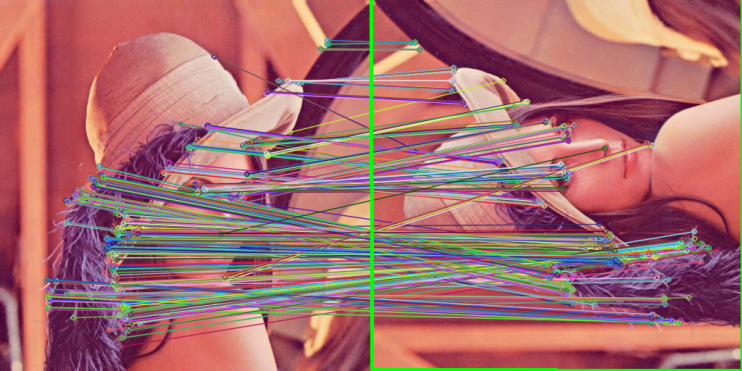

In this section we show the Lena image matched against a copy of itself rotated by 90 degrees. The top set uses ArrayFire and the bottom set uses OpenCV. A green rectangle around each matched picture indicates the homography of the detected object.
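
Drawing such a rectangle amounts to mapping the corners of the reference image through a 3×3 homography $H$ in homogeneous coordinates, $(u, v, w)^T = H\,(x, y, 1)^T$, and then dividing by $w$. In practice $H$ is estimated from the matched keypoints (for example with OpenCV's `findHomography` using RANSAC). The sketch below assumes $H$ is already known and uses a hypothetical 512×512 image with a pure 90-degree rotation; `project` and `outline` are illustrative helpers, not library functions.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def project(h: Matrix, x: float, y: float) -> Tuple[float, float]:
    """Map (x, y) through a 3x3 homography, then divide by the homogeneous w."""
    u = h[0][0] * x + h[0][1] * y + h[0][2]
    v = h[1][0] * x + h[1][1] * y + h[1][2]
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return (u / w, v / w)


def outline(h: Matrix, width: int, height: int) -> List[Tuple[float, float]]:
    """Project the four corners of a width x height image (the drawn rectangle)."""
    corners = [(0, 0), (width, 0), (width, height), (0, height)]
    return [project(h, x, y) for x, y in corners]


# Hypothetical example: a 90-degree rotation of a 512x512 image,
# (x, y) -> (512 - y, x), written as a 3x3 homography.
W = 512
ROT90 = [[0.0, -1.0, float(W)],
         [1.0,  0.0, 0.0],
         [0.0,  0.0, 1.0]]

if __name__ == "__main__":
    print(outline(ROT90, W, W))
```

For a pure rotation the projected outline is the same square with its corners cycled; with a general homography the four projected points form an arbitrary quadrilateral.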

ORB with ArrayFire, 21.6X speedup over CPU code.

ORB with OpenCV for CUDA, 16.4X speedup over CPU code.

Discussion

We see a clear processing-time advantage to using GPUs over CPUs, as stated in Part 1. With GPUs, descriptor extraction can be done more than 20 times faster than on a CPU. Similarly, descriptor matching using Hamming distance achieves an average speedup of about 25 times.

These are preliminary values; these functions are still under development. Please contact technical@arrayfire.com if you are interested in trying out our upcoming Computer Vision functions in ArrayFire.

References

[1] Rosten, Edward, and Tom Drummond. “Machine learning for high-speed corner detection.” Computer Vision–ECCV 2006. Springer Berlin Heidelberg, 2006. 430-443.

[2] Rublee, Ethan, et al. “ORB: an efficient alternative to SIFT or SURF.” Computer Vision (ICCV), 2011 IEEE International Conference on. IEEE, 2011.

[3] OpenCV-Python Tutorials. “ORB (Oriented FAST and Rotated BRIEF).” http://docs.opencv.org/trunk/doc/py_tutorials/py_feature2d/py_orb/py_orb.html
