This morning, I woke up to find the following comment in the MATLAB® Newsgroup:

Over two years ago, MathWorks® started to build a clone of Jacket, which you now know as the GPU computing support in the Parallel Computing Toolbox (TM). At the time, there were many naysayers suggesting that Jacket would somehow be eclipsed by the clone. Made sense, right?

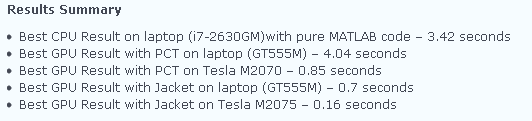

Wrong! Here we are 2 years later and the clone is still a poor imitation. There are several technical reasons for this, but if you are serious about getting great performance from your GPU, Jacket is the better option. Look at all the real customers who are seeing big benefits. Here are some other recent benchmarks from the Walking Randomly blog that show Jacket on a laptop is faster than PCT (TM) on a Tesla:

If it were easy to imitate Jacket, then MathWorks® would have siphoned away all the Jacket users. The truth is that it is not easy to build great GPU software, and the Jacket user base continues to explode. Jacket is not only better than PCT (TM) but is also getting better at a faster rate. Here’s to another 2, 5, and 10+ years of great speeds for all of you Jacket programmers!

To Juliette and others out there, if you really want PCT (TM) to get better, you might consider asking MathWorks® to spend less time cloning and more time working with others who are adding value to the MATLAB® ecosystem.

Comments 6

If I understand your final sentence correctly, MathWorks is not willing to cooperate with you and add Jacket to the standard MathWorks toolbox portfolio?

There are many ways to potentially cooperate. Thus far, MathWorks has chosen to ignore any options which would actually benefit the scientists, engineers, and analysts writing M-code.

OK … and you are asking MATLAB users to ask MathWorks to spend less time cloning and more time working with others who are adding value to the MATLAB® ecosystem? Are you sure that this kind of feedback makes any sense?

I think this is not a good idea, because this kind of request is extremely vague.

Sorry if this is confusing. In most companies, feedback from customers is very beneficial in shaping the company’s behavior (for instance, we strive to develop things customers want here at AccelerEyes – like our recent OpenCL announcements).

Yes, I understand your main intention, but can you imagine how MathWorks would react to a user recommendation like: “Hi, I am a MATLAB user. Could you be so kind and spend less time cloning and more time working with others who are adding value to the MATLAB® ecosystem?”

If you really need some help from users like me, let us know exactly what is important to tell MathWorks so that it becomes more cooperative and less competitive with others, like AccelerEyes.

MathWorks should just license Jacket, cut down on its development costs, and still provide a superior product.